Aug 15, 2018

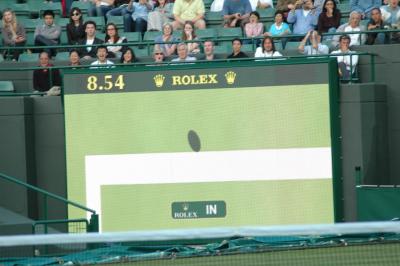

From the introduction of the “1st and Ten” line in NFL broadcasts in 1998 to the use of the Hawk-Eye system for line calls in tennis and cricket, sports viewers now expect to see graphics on their screens to help explain the action.

Irfan Essa, director of the Machine Learning Center at Georgia Tech (ML@GT), recently gave an invited talk, “Computational Video for Sports: Challenges for Large-Scale Video Analysis,” detailing why technologies such as computer vision and augmented reality are so prevalent in sports broadcasts. During the talk, which took place at the 4th International Workshop on Computer Vision in Sports (CVsports) in June, he also discussed the challenges that computer vision scientists face when creating technology to improve the sports industry.

One such challenge is using computer vision and machine learning to build models that can identify common sports scenes and help write news captions. But what humans interpret easily is often not so simple for machines.

When analyzing a photo of Georgia Tech’s head football coach Paul Johnson getting a celebratory Gatorade bath on the field, a computer algorithm misidentified a camera in the scene as a hair dryer, and the resulting caption read “Man taking shower while others watch.”

One of the next steps in the field is improving computer vision so that it better accounts for context and relies on better training models.

Scientists are also working to make this type of technology usable on lower-quality video. Current computer vision techniques work well on broadcast-quality video, in part because of the detail available in high definition, but researchers would like to make the techniques accurate and cost-effective for lower-quality video so that high school sports can also benefit.

By 2019, video content is expected to account for 80 percent of the world’s total web content. Understanding context, analyzing lower-quality video, and establishing better metrics for analyzing the data are all key next steps for this segment of computer science, according to Essa.

The workshop took place as part of the CVF/IEEE Computer Vision and Pattern Recognition (CVPR) conference in Salt Lake City, Utah. Georgia Tech researchers and alumni presented work in computer vision and were among the 6,000 attendees at the conference. Throughout the week, attendees presented their latest research through oral presentations, spotlights, poster sessions, and workshops.