The IEEE International Conference on Computer Vision (ICCV) takes place biennially and focuses on the interdisciplinary scientific field of computer vision, which deals with how computers can gain high-level understanding from digital images or videos.

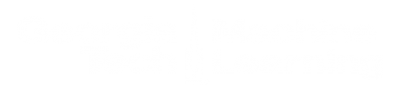

ICCV 2021, Oct. 11-17, includes work from Georgia Tech researchers in the areas of Vision + Language; Medical, biological, and cell microscopy; Video analysis and understanding; Transfer/Low-shot/Semi/Unsupervised Learning; and Vision for robotics and autonomous vehicles.

Georgia Tech Authors

Harsh Agrawal

Harsh Agrawal- Jonathan C Balloch

- Dhruv Batra

- Vincent Cartillier

- Prithvijit Chattopadhyay

- Judy Hoffman

- Deeksha Kartik

- Shivam Khare

- Zsolt Kira

- Abhinav Moudgil

- Yash Mukund Kant

- Devi Parikh

- Viraj Prabhu

- Fiona K Ryan

- James S Smith

- Erik Wijmans

- Joel Ye

Researcher Insights

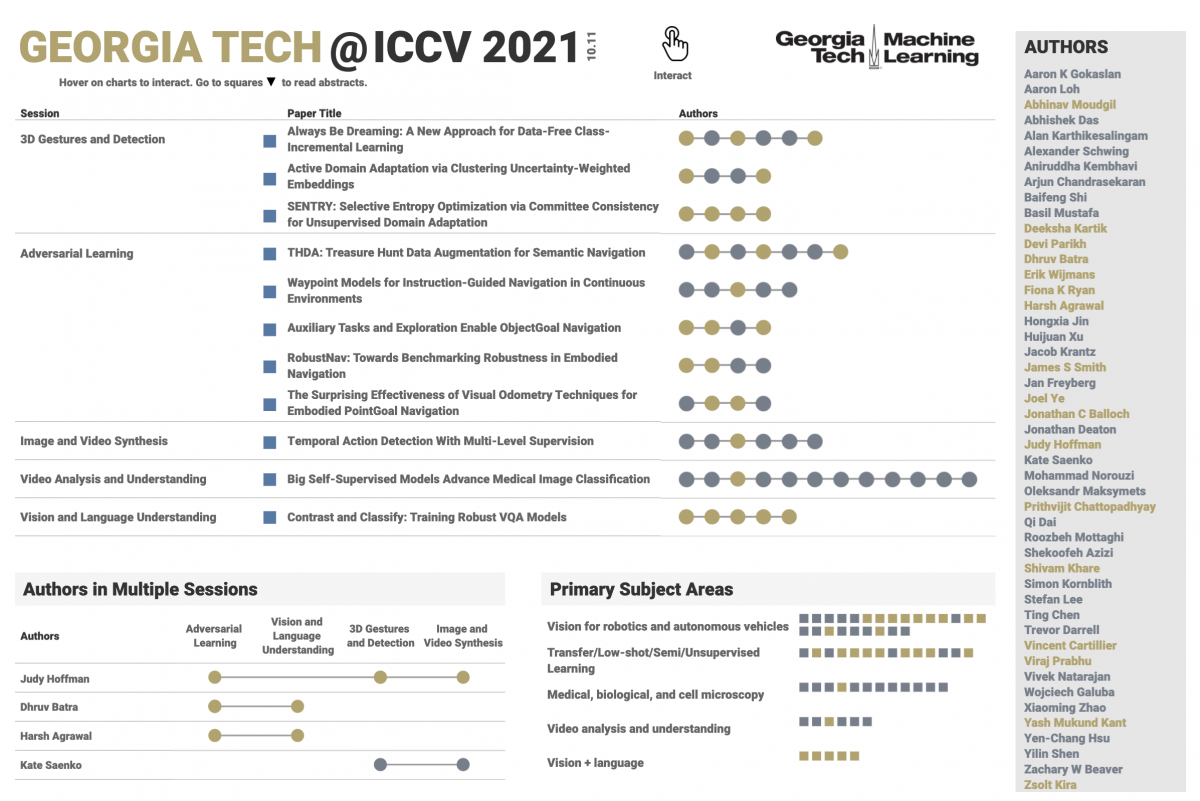

Dhruv Batra, Associate Professor

School of Interactive Computing

ICCV RESEARCH AREAS: Vision + language, Vision for robotics and autonomous vehicles, Vision + other modalities

If you had to pick, which of your research results accepted at ICCV would you want to highlight?

I would pick Joel, Abhishek, and Erik’s result on ObjectNav. They made a significant advance toward virtual robots (or embodied agents in 3D simulation) that can search for objects in new environments. They also won the ObjectNav challenge at CVPR earlier this year.

What recent advancements or new challenges have you seen in the research fields that you are involved in?

I am pretty excited about the release of fast 3D simulators (like AI Habitat) and by the release of large-scale 3D datasets like the Habitat-Matterport 3D dataset, which we released earlier this year. More details on the latter are here.

If you focused on one social application of your work this year, what would it be?

I didn’t. But I think assistive robots and egocentric AI assistant (e.g. operating on a user’s smart glasses) have a lot of potential for social impact.

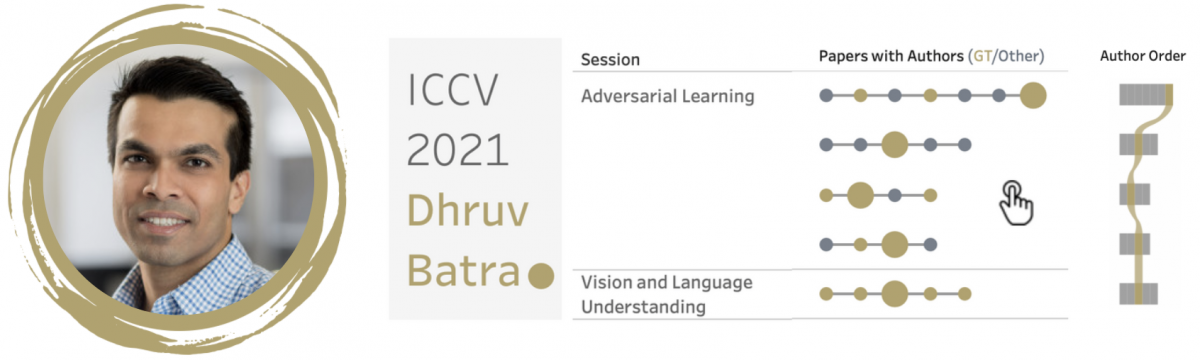

Judy Hoffman, Assistant Professor

School of Interactive Computing

ICCV RESEARCH AREAS: Transfer/Low-shot/Semi/Unsupervised Learning, Representation learning, Video analysis and understanding, Action and behavior recognition & Motion and tracking, Vision for robotics and autonomous vehicles, Datasets and evaluation

If you had to pick, which of your research results accepted at ICCV would you want to highlight?

SENTRY is the first in a line of new domain adaptation methods from our lab that selectively adapt a vision model only on reliable observations. Visual appearance can naturally change in many ways (times of day, weather patterns etc.) that make it difficult or even impossible to prepare for all circumstances. Adaptation specializes vision models in response to new observations but can unintentionally degrade performance if the new observations are noisy or ambiguous. SENTRY automatically identifies and filters these problematic observations to produce more reliable adaptation that can be deployed with less manual oversight.

What recent advancements or new challenges have you seen in the research fields that you are involved in?

As our vision systems are more frequently relied upon to make decisions that impact people’s lives, it is imperative that our community focuses on producing reliable and equitable systems. My lab addresses this challenge through new algorithms and analysis tools to discover and mitigate biases in data used for learning and in the resulting learned models.

If you focused on one social application of your work this year, what would it be?

My primary concern is making the fundamental technology reliable across the variety of applications where vision systems are used today. To this end, my lab is currently studying a variety of applications, including road scene recognition, aerial recognition for autonomous flight, indoor robotic navigation, and medical diagnostics. I find that working on multiple applications simultaneously is the best way to illuminate flaws in the core technology.

Zsolt Kira, Assistant Professor

School of Interactive Computing

ICCV RESEARCH AREAS: Transfer/Low-shot/Semi/Unsupervised Learning, Recognition and classification

If you had to pick, which of your research results accepted at ICCV would you want to highlight?

Our work on data-free continual learning is really exciting. It tackles the problem of how neural networks can learn new tasks, without forgetting old ones – a problem called catastrophic forgetting. Existing methods largely get around this problem by storing past data, which has a lot of negative implications such as privacy (you may not want the companies deploying these models to have to store raw data containing faces and people). Our method can reduce catastrophic forgetting significantly without storing any data, by essentially generating data from scratch from the learned model (sometimes referred to as ‘dreaming’). It can even do better than some methods that store data, which opens up a lot of possibilities for having artificial intelligence that continuously adapts without having to store past data.

What recent advancements or new challenges have you seen in the research fields that you are involved in?

There have been amazing strides across all sorts of challenges in machine learning and artificial intelligence, including reducing the amount of labeled data needed, reducing catastrophic forgetting, estimating what the models don’t know, and more. This enables a host of applications where annotating data is very laborious and costly or adaptation to change is necessary. However, a lot of these advancements have been on curated datasets from the web, and applying them to real-world things like robots is still a challenge as the problems become much more messy. My group is interested in scaling up these algorithms to larger-scale real-world problems and datasets, including for use in robotics such as in self-driving cars.

If you focused on one social application of your work this year, what would it be?

Allowing AI models to continuously learn new things has wide-ranging applications, though our focus on not storing data is especially applicable to applications where privacy might be an issue. For example, in healthcare settings we might have AI methods for detecting various conditions such as diseases, sepsis, etc. and you would want to be able to share these models across different hospitals. Since the data will differ widely (different hospitals use different equipment, have different types of patients, etc.) the models will have to be re-trained on new data but throwing away what was learned in previous settings is wasteful. Our method allows one to do that without storing potentially sensitive patient data.

VIDEOS: Computer Vision in Focus

ObjectGoal Navigation (ObjectNav) is an embodied task wherein agents are to navigate to an object instance in an unseen environment. New work from Georgia Tech shows agents achieving 24.5% success and 8.1% SPL, a 37% and 8% relative improvement over prior state-of-the-art, respectively, on the Habitat ObjectNav Challenge. Learn More | Video: Joel Ye

Active Domain Adaptation via Clustering Uncertainty-weighted Embeddings

Always Be Dreaming ICCV2021 Highlight

Auxiliary Tasks and Exploration Enable ObjectNav

RobustNav: Towards Benchmarking Robustness in Embodied Navigation

SENTRY: Selective Entropy Optimization via Committee Consistency for Unsupervised Domain Adaptation

THDA: Treasure Hunt Data Augmentation for Semantic Navigation (ICCV2021)

Waypoint Models for Instruction-guided Navigation in Continuous Environments

Accepted Papers

Active Domain Adaptation via Clustering Uncertainty-Weighted Embeddings

Viraj Prabhu (Georgia Tech)*; Arjun Chandrasekaran (Max Planck Institute for Intelligent Systems); Kate Saenko (Boston University); Judy Hoffman (Georgia Tech)

Generalizing deep neural networks to new target domains is critical to their real-world utility. In practice, it may be feasible to get some target data labeled, but to be cost-effective it is desirable to select a maximally-informative subset via active learning (AL). We study the problem of AL under a domain shift, called Active Domain Adaptation (Active DA). We demonstrate how existing AL approaches based solely on model uncertainty or diversity sampling are less effective for Active DA. We propose Clustering Uncertainty-weighted Embeddings (CLUE), a novel label acquisition strategy for Active DA that performs uncertainty-weighted clustering to identify target instances for labeling that are both uncertain under the model and diverse in feature space. CLUE consistently outperforms competing label acquisition strategies for Active DA and AL across learning settings on 6 diverse domain shifts for image classification.

Always Be Dreaming: A New Approach for Data-Free Class-Incremental Learning

James S Smith (Georgia Institute of Technology)*; Yen-Chang Hsu (Samsung Research America); Jonathan C Balloch (Georgia Institute of Technology); Yilin Shen (Samsung Research America); Hongxia Jin (Samsung Research America); Zsolt Kira (Georgia Institute of Technology)

Modern computer vision applications suffer from catastrophic forgetting when incrementally learning new concepts over time. The most successful approaches to alleviate this forgetting require extensive replay of previously seen data, which is problematic when memory constraints or data legality concerns exist. In this work, we consider the high-impact problem of Data-Free Class-Incremental Learning (DFCIL), where an incremental learning agent must learn new concepts over time without storing generators or training data from past tasks. One approach for DFCIL is to replay synthetic images produced by inverting a frozen copy of the learner's classification model, but we show this approach fails for common class-incremental benchmarks when using standard distillation strategies. We diagnose the cause of this failure and propose a novel incremental distillation strategy for DFCIL, contributing a modified cross-entropy training and importance-weighted feature distillation, and show that our method results in up to a 25.1% increase in final task accuracy (absolute difference) compared to SOTA DFCIL methods for common class-incremental benchmarks. Our method even outperforms several standard replay based methods which store a coreset of images.

Auxiliary Tasks and Exploration Enable ObjectGoal Navigation

Joel Ye (Georgia Institute of Technology)*; Dhruv Batra (Georgia Tech & Facebook AI Research); Abhishek Das (Facebook AI Research); Erik Wijmans (Georgia Tech)

ObjectGoal Navigation (ObjectNav) is an embodied task wherein agents are to navigate to an object instance in an unseen environment. Prior works have shown that end-to-end ObjectNav agents that use vanilla visual and recurrent modules, e.g. a CNN+RNN, perform poorly due to overfitting and sample inefficiency. This has motivated current state-of-the-art methods to mix analytic and learned components and operate on explicit spatial maps of the environment. We instead re-enable a generic learned agent by adding auxiliary learning tasks and an exploration reward. Our agents achieve 24.5% success and 8.1% SPL, a 37% and 8% relative improvement over prior state-of-the-art, respectively, on the Habitat ObjectNav Challenge. From our analysis, we propose that agents will act to simplify their visual inputs so as to smooth their RNN dynamics, and that auxiliary tasks reduce overfitting by minimizing effective RNN dimensionality; i.e. a performant ObjectNav agent that must maintain coherent plans over long horizons does so by learning smooth, low-dimensional recurrent dynamics.

Big Self-Supervised Models Advance Medical Image Classification

Shekoofeh Azizi (Google)*; Basil Mustafa (Google); Fiona K Ryan (Georgia Institute of Technology); Zachary W Beaver (Google); Jan Freyberg (Google Health); Jonathan Deaton (Google); Aaron Loh (Google); Alan Karthikesalingam (Google Health); Simon Kornblith (Google Brain); Ting Chen (Google); Vivek Natarajan (Google); Mohammad Norouzi (Google Research, Brain Team)

Self-supervised pretraining followed by supervised fine-tuning has seen success in image recognition, especially when labeled examples are scarce, but has received limited attention in medical image analysis. This paper studies the effectiveness of self-supervised learning as a pretraining strategy for medical image classification. We conduct experiments on two distinct tasks: dermatology condition classification from digital camera images and multi-label chest X-ray classification, and demonstrate that self-supervised learning on ImageNet, followed by additional self-supervised learning on unlabeled domain-specific medical images significantly improves the accuracy of medical image classifiers.We introduce a novel Multi-Instance Contrastive Learning (MICLe) method that uses multiple images of the underlying pathology per patient case, when available, to construct more informative positive pairs for self-supervised learning. Combining our contributions, we achieve an improvement of 6.7% in top-1 accuracy and an improvement of 1.1% in mean AUC on dermatology and chest X-ray classification respectively, outperforming strong supervised baselines pretrained on ImageNet. In addition, we show that big self-supervised models are robust to distribution shift and can learn efficiently with a small number of labeled medical images.

Contrast and Classify: Training Robust VQA Models

Yash Mukund Kant (Georgia Institute of Technology)*; Abhinav Moudgil (Georgia Institute of Technology); Dhruv Batra (Georgia Tech & Facebook AI Research); Devi Parikh (Georgia Tech & Facebook AI Research); Harsh Agrawal (Georgia Institute of Technology)

Recent Visual Question Answering (VQA) models have shown impressive performance on the VQA benchmark but remain sensitive to small linguistic variations in input questions. Existing approaches address this by augmenting the dataset with question paraphrases from visual question generation models or adversarial perturbations. These approaches use the combined data to learn an answer classifier by minimizing the standard cross-entropy loss. To more effectively leverage augmented data, we build on the recent success in contrastive learning. We propose a novel training paradigm (ConClaT) that optimizes both cross-entropy and contrastive losses. The contrastive loss encourages representations to be robust to linguistic variations in questions while the cross-entropy loss preserves the discriminative power of representations for answer prediction. We find that optimizing both losses -- either alternately or jointly -- is key to effective training. On the VQA-Rephrasings benchmark, which measures the VQA model's answer consistency across human paraphrases of a question, ConClaT improves Consensus Score by 1.63% over an improved baseline. In addition, on the standard VQA 2.0 benchmark, we improve the VQA accuracy by 0.78% overall. We also show that ConClaT is agnostic to the type of data-augmentation strategy used.

RobustNav: Towards Benchmarking Robustness in Embodied Navigation

Prithvijit Chattopadhyay (Georgia Institute of Technology)*; Judy Hoffman (Georgia Tech); Roozbeh Mottaghi (Allen Institute for AI); Aniruddha Kembhavi (Allen Institute for Artificial Intelligence)

As an attempt towards assessing the robustness of embodied navigation agents, we propose RobustNav, a framework to quantify the performance of embodied navigation agents when exposed to a wide variety of visual-- affecting RGB inputs -- and dynamics -- affecting transition dynamics -- corruptions. Most recent efforts in visual navigation have typically focused on generalizing to novel target environments with similar appearance and dynamics characteristics. With RobustNav, we find that some standard embodied navigation agents significantly underperform (or fail) in the presence of visual or dynamics corruptions. We systematically analyze the kind of idiosyncrasies that emerge in the behavior of such agents when operating under corruptions. Finally, for visual corruptions in RobustNav, we show that while standard techniques to improve robustness such as data-augmentation and self-supervised adaptation offer some zero-shot resistance and improvements in navigation performance, there is still a long way to go in terms of recovering lost performance relative to clean “non-corrupt” settings, warranting more research in this direction. Our code is available at https://github.com/allenai/robustnav.

SENTRY: Selective Entropy Optimization via Committee Consistency for Unsupervised Domain Adaptation

Viraj Prabhu (Georgia Tech)*; Shivam Khare (Georgia Institute of Technology); Deeksha Kartik (Georgia Institute of Technology); Judy Hoffman (Georgia Tech)

Many existing approaches for unsupervised domain adaptation (UDA) focus on adapting under only data distribution shift and offer limited success under additional cross-domain label distribution shift. Recent work based on self-training using target pseudolabels has shown promise, but on challenging shifts pseudolabels may be highly unreliable and using them for self-training may lead to error accumulation and domain misalignment. We propose Selective Entropy Optimization via Committee Consistency (SENTRY), a UDA algorithm that judges the reliability of a target instance based on its predictive consistency under a committee of random image transformations. Our algorithm then selectively minimizes predictive entropy to increase confidence on highly consistent target instances, while maximizing predictive entropy to reduce confidence on highly inconsistent ones. In combination with pseudolabel-based approximate target class balancing, our approach leads to significant improvements over the state-of-the-art on 27/31 domain shifts from standard UDA benchmarks as well as benchmarks designed to stress-test adaptation under label distribution shift.

Temporal Action Detection With Multi-Level Supervision

Baifeng Shi (UC Berkeley)*; Qi Dai (Microsoft Research); Judy Hoffman (Georgia Tech); Kate Saenko (Boston University); Trevor Darrell (UC Berkeley); Huijuan Xu (Pennsylvania State University)

Training temporal action detection in videos requires large amounts of labeled data, yet such annotation is expensive to collect. Incorporating unlabeled or weakly-labeled data to train action detection model could help reduce annotation cost. In this work, we first introduce the Semi-supervised Action Detection (SSAD) task with a mixture of labeled and unlabeled data and analyze different types of errors in the proposed SSAD baselines which are directly adapted from the semi-supervised classification literature. Identifying that the main source of error is action incompleteness (i.e., missing parts of actions), we alleviate it by designing an unsupervised foreground attention (UFA) module utilizing the conditional independence between foreground and background motion. Then we incorporate weakly-labeled data into SSAD and propose Omni-supervised Action Detection (OSAD) with three levels of supervision. To overcome the accompanying action-context confusion problem in OSAD baselines, an information bottleneck (IB) is designed to suppress the scene information in non-action frames while preserving the action information. We extensively benchmark against the baselines for SSAD and OSAD on our created data splits in THUMOS14 and ActivityNet1.2, and demonstrate the effectiveness of the proposed UFA and IB methods. Lastly, the benefit of our full OSAD-IB model under limited annotation budgets is shown by exploring the optimal annotation strategy for labeled, unlabeled and weakly-labeled data.

THDA: Treasure Hunt Data Augmentation for Semantic Navigation

Oleksandr Maksymets (Facebook AI Research)*; Vincent Cartillier (Georgia Tech); Aaron K Gokaslan (Cornell); Erik Wijmans (Georgia Tech); Stefan Lee (Oregon State University); Wojciech Galuba (Facebook); Dhruv Batra (Georgia Tech & Facebook AI Research)

Can general-purpose neural models learn to navigate? For PointGoal navigation (""go to x, y""), the answer is a clear `yes' -- mapless neural models composed of task-agnostic components (CNNs and RNNs) trained with large-scale model-free reinforcement learning achieve near-perfect performance. However, for ObjectGoal navigation (""find a TV""), this is an open question; one we tackle in this paper. The current best-known result on ObjectNav with general-purpose models is 6% success rate. First, we show that the key problem is overfitting. Large-scale training results in 94% success rate on training environments and only 8% in validation. We observe that this stems from agents memorizing environment layouts during training -- sidestepping the need for exploration and directly learning shortest paths to nearby goal objects. We show that this is a natural consequence of optimizing for the task metric (which in fact penalizes exploration), is enabled by powerful observation encoders, and is possible due to the finite set of training environment configurations. Informed by our findings, we introduce Treasure Hunt Data Augmentation (THDA) to address overfitting in ObjectNav. THDA inserts 3D scans of household objects at arbitrary scene locations and uses them as ObjectNav goals -- augmenting and greatly expanding the set of training layouts. Taken together with our other proposed changes, we improve the state of art on the Habitat ObjectGoal Navigation benchmark by 90% (from 14% success rate to 27%) and path efficiency by 48% (from 7.5 SPL to 11.1 SPL).

The Surprising Effectiveness of Visual Odometry Techniques for Embodied PointGoal Navigation

Xiaoming Zhao (University of Illinois at Urbana-Champaign)*; Harsh Agrawal (Georgia Institute of Technology); Dhruv Batra (Georgia Tech & Facebook AI Research); Alexander Schwing (UIUC)

It is fundamental for personal robots to reliably navigate to a specified goal. To study this task, PointGoal navigation has been introduced in simulated Embodied AI environments. Recent advances solve this PointGoal navigation task with near-perfect accuracy (99.6% success) in photo-realistically simulated environments, assuming noiseless egocentric vision, noiseless actuation, and most importantly, perfect localization. However, under realistic noise models for visual sensors and actuation, and without access to a “GPS and Compass sensor,” the 99.6%-success agents for PointGoal navigation only succeed with 0.3%. In this work, we demonstrate the surprising effectiveness of visual odometry for the task of PointGoal navigation in this realistic setting, i.e., with realistic noise models for perception and actuation and without access to GPS and Compass sensors. We show that integrating visual odometry techniques into navigation policies improves the state-of-the-art on the popular Habitat PointNav benchmark by a large margin, improving success from 64.5% to 71.7% while executing 6.4 times faster.

Waypoint Models for Instruction-Guided Navigation in Continuous Environments

Jacob Krantz (Oregon State University)*; Aaron K Gokaslan (Cornell); Dhruv Batra (Georgia Tech & Facebook AI Research); Stefan Lee (Oregon State University); Oleksandr Maksymets (Facebook AI Research)

Little inquiry has explicitly addressed the role of action spaces in language-guided visual navigation -- either in terms of its effect on navigation success or the efficiency with which a robotic agent could execute the resulting trajectory. Building on the recently released VLN-CE setting for instruction following in continuous environments, we develop a class of language-conditioned waypoint prediction networks to examine this question. We vary the expressivity of these models to explore a spectrum between low-level actions and continuous waypoint prediction. We measure task performance and estimated execution time on a profiled LoCoBot robot. We find more expressive models result in simpler, faster to execute trajectories, but lower-level actions can achieve better navigation metrics by approximating shortest paths better. Further, our models outperform prior work in VLN-CE and set a new state-of-the-art on the public leaderboard -- increasing success rate by 4% with our best model on this challenging task.

Workshops

2nd Workshop on Adversarial Robustness In the Real World

Yingwei Li, Adam Kortylewski, Cihang Xie, Yutong Bai, Angtian Wang, Chenglin Yang, Xinyun Chen, Yinpeng Dong, Tianyu Pang, Jieru Mei, Nataniel Ruiz, Alexander Robey, Wieland Brendel, Matthias Bethge, George Pappas, Philippe Burlina, Rama Chellappa, Dawn Song, Jun Zhu, Hang Su, Matthias Hein, Judy Hoffman, Alan Yuille

In this workshop, we aim to bring together researchers from various fields, including robust vision, adversarial machine learning, and explainable AI, to discuss recent research and future directions for adversarial robustness and explainability, with a particular focus on real-world scenarios.

AI for Creative Video Editing and Understanding

Fabian Caba, Yu Xiong, Alejandro Pardo, Anyi Rao, Qingqiu Huang, Ali Thabet, Dong Liu, Dahua Lin, Bernard Ghanem

This workshop is for the 1st installment of the AI for Creative Video Editing and Understanding (CVEU). The workshop brings together researchers working on computer vision, machine learning, computer graphics, Human Computer Interaction, and cognitive research. It aims to bring awareness of recent advances in machine learning technologies to enable assisted creative-video creation and understanding. Irfan Essa will deliver a keynote on "AI for Video Creation."

LVIS Challenge Workshop

Ross Girshick, Agrim Gupta, Achal Dave, Bowen Cheng, Alexander Kirillov, Daniel Bolya, Judy Hoffman, Piotr Dollár

Today, rigorous evaluation of general purpose object detectors is mostly performed in the limited category regime (e.g. 80) or when there are a large number of training examples per category (e.g. 100 to 1000+). LVIS provides an opportunity to enable research in the setting where there are a large number of categories and where per-category data is sometimes scarce. Given that state-of-the-art deep learning methods for object detection perform poorly in the low-sample regime, we believe that our dataset poses an important and exciting new scientific challenge.

Web Design and Data Graphics: Joshua Preston, Research Communications Manager